TL;DR

ChatGPT drives 10% of Vercel's new signups—conversational search is gaining real ground, fast

AI Overviews appear in 16%+ of Google searches, higher for commercial queries

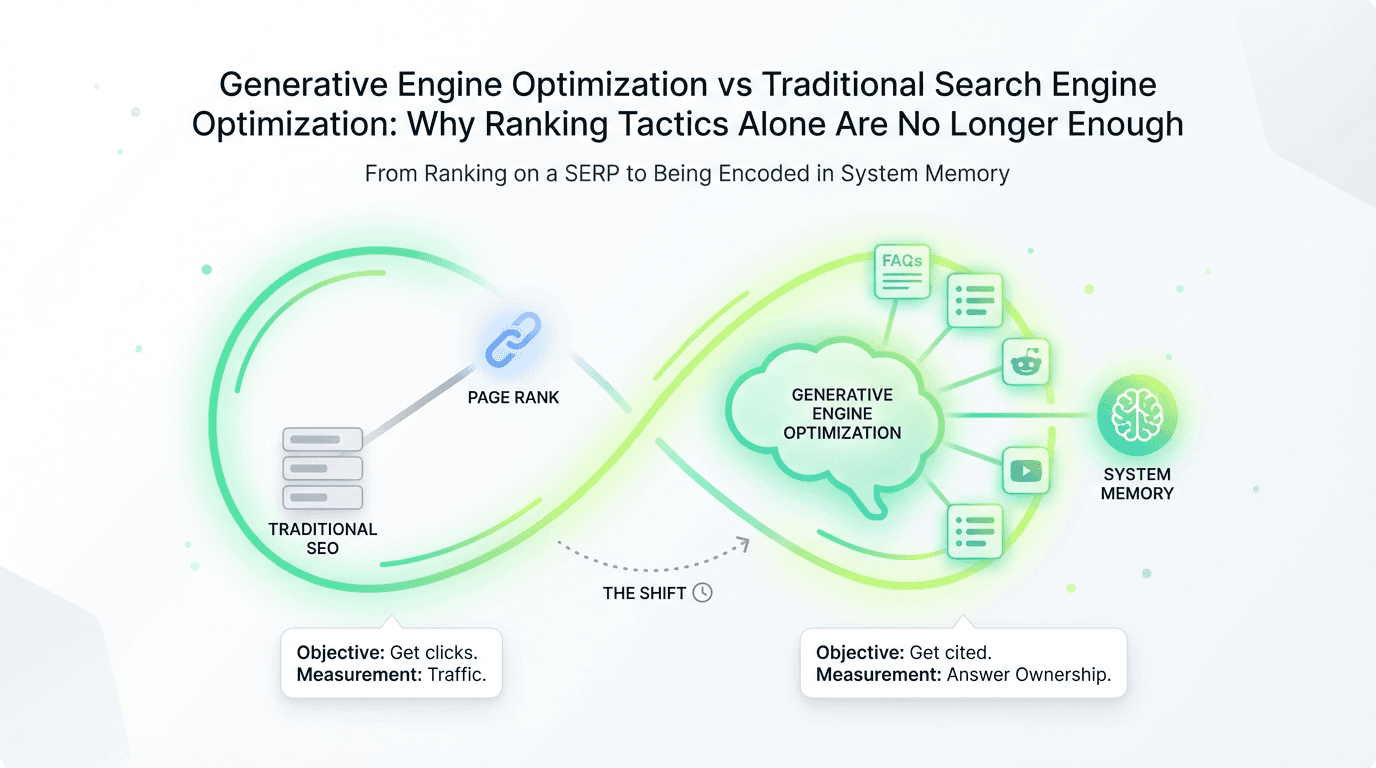

Traditional search engine optimization optimized for ranking pages; Generative Engine Optimization optimizes for encoding knowledge

The core principles didn't change—authority, clarity, structure still matter—but the objective did: from traffic acquisition to answer ownership

Execution framework: Structure for extractability, build entity clarity across digital platforms, earn authority signals that transfer to machine learning systems, measure citations and share of voice

Strategic shift: Marketing strategies move from volume to extractability; distribution becomes multi-platform by necessity; system memory becomes a competitive moat

Bottom line: If traditional optimization was about earning machine trust, the future is about earning system memory—businesses that understand this shift will own the next decade of organic discovery

Last month, Vercel's CEO dropped a stat that should make every growth operator pause: ChatGPT now drives 10% of their new signups. Six months ago, it was less than 1%.

The Semrush 2026 AI Overviews study found that machine-generated responses now appear in at least 16% of all Google searches—significantly higher for comparison and high-intent commercial queries. Meanwhile, research from Princeton demonstrated that structured optimization techniques can boost website visibility in generative engines by up to 40%. The $850M market projection for 2026 isn't hype—it's the market pricing in a fundamental shift in how discovery works.

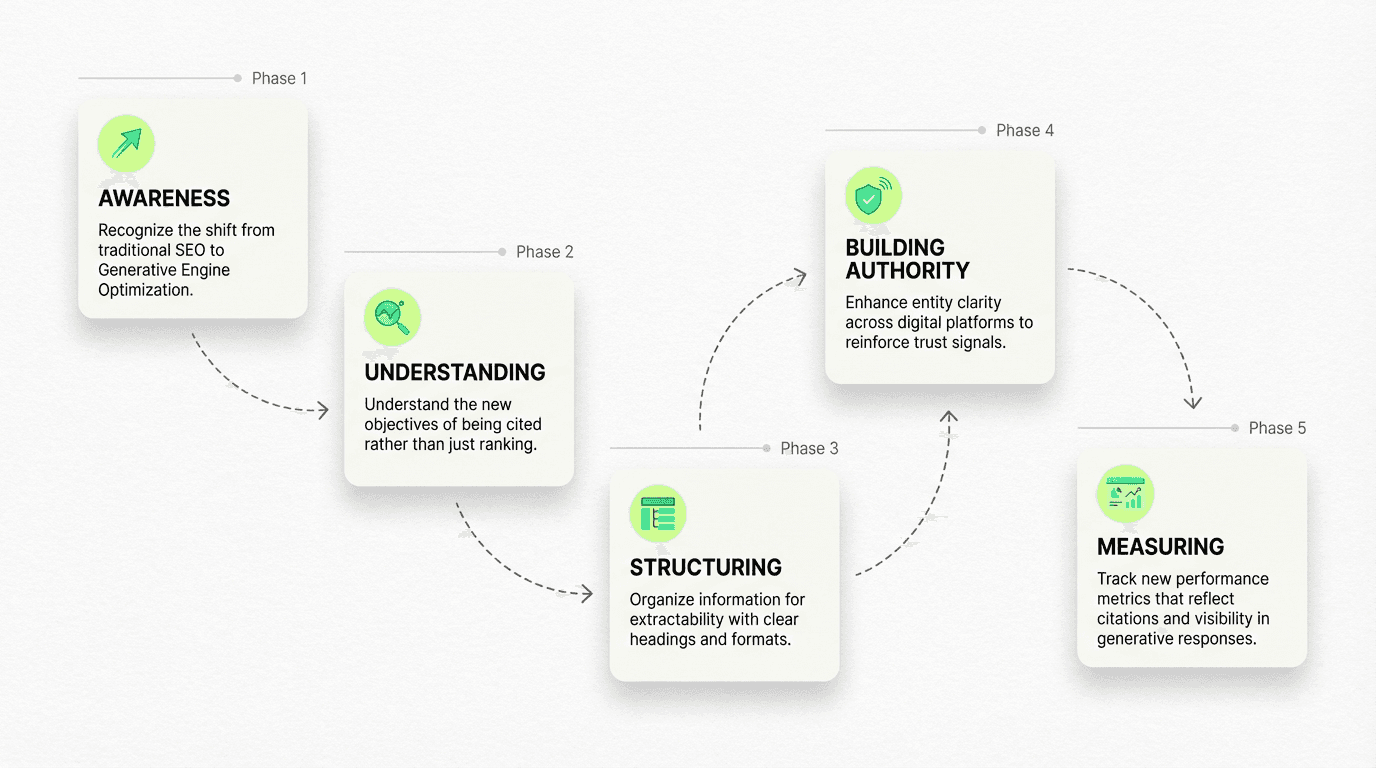

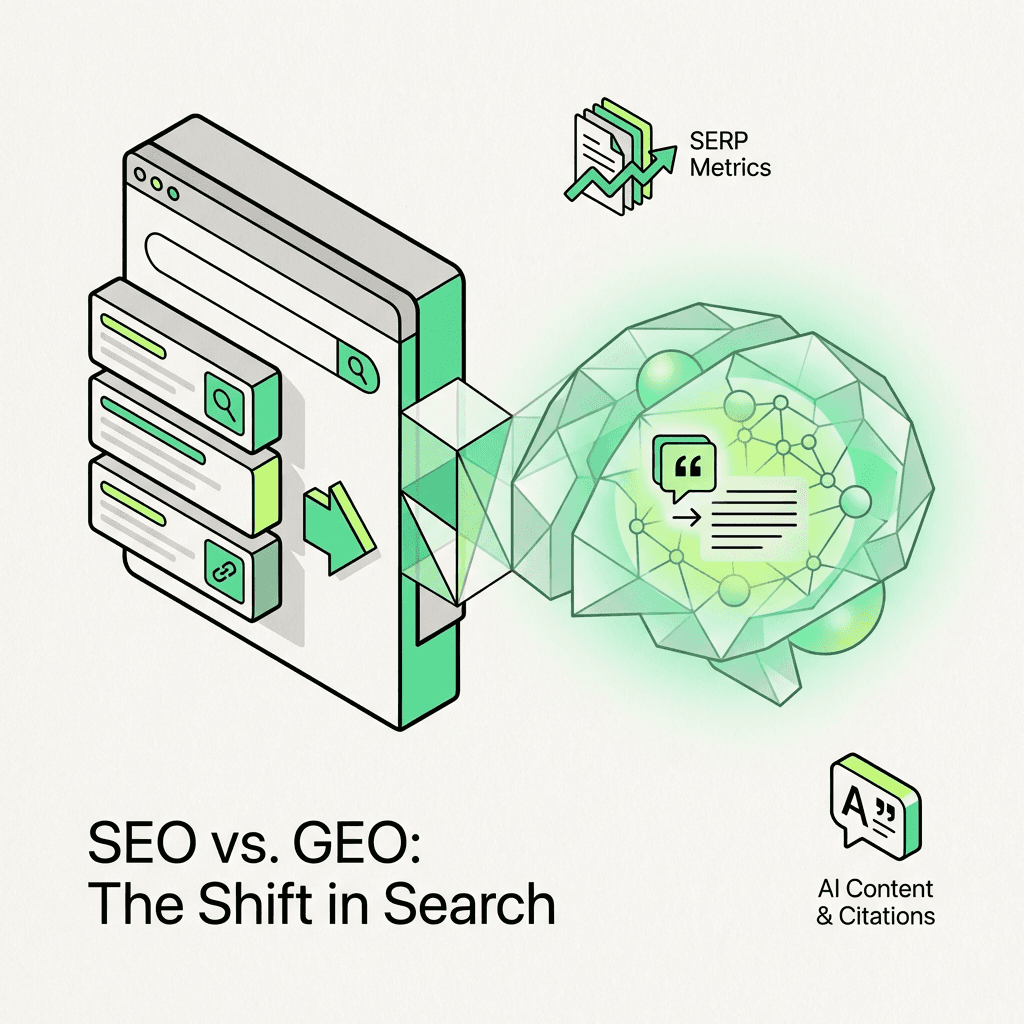

The debate is framing the question wrong. This isn't about choosing one over the other. Generative Engine Optimization is the practice of structuring information so machine learning systems can easily parse, synthesize, and cite it when generating answers—shifting the goal from ranking on search results to being encoded in system memory. This approach reveals what search engine optimization always was underneath—a system for making your expertise legible to machines by aligning with how search engines work. The machines just got smarter.

I've spent the last few years helping B2B SaaS companies scale through organic strategies, watching the playbook that worked for a decade start to crack. The shift isn't that "optimization is dead"—the objective changed. We're moving from optimizing to rank on a SERP to optimizing to be cited in a synthesis. From traffic acquisition to answer ownership. From page rank to system memory.

The companies that execute on this now will own the next decade of organic discovery. The ones that don't will keep optimizing for a game that's already changed.

The Surface Changed, The Principles Didn't

Most marketers are still playing by 2019 rules.

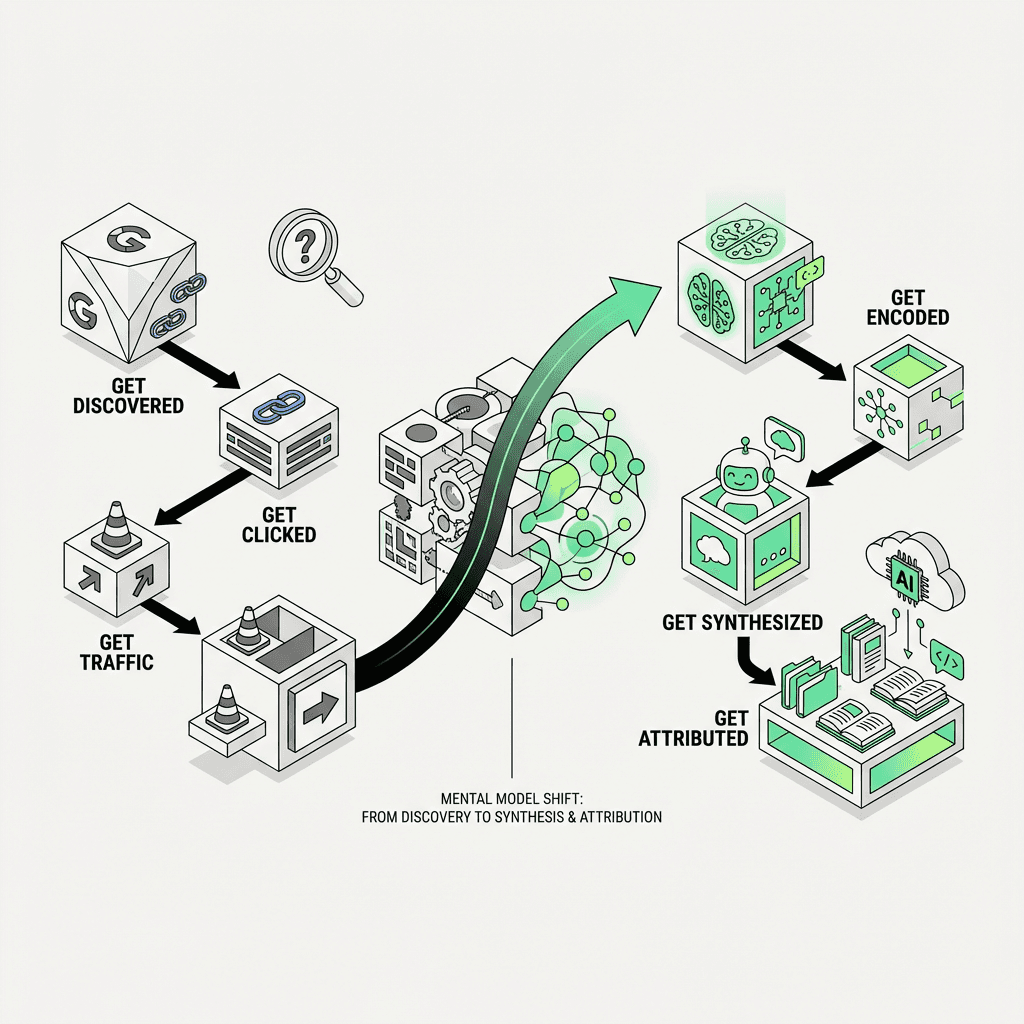

Traditional search engine optimization taught us to optimize for algorithms that rank pages. You built authority through backlinks, stuffed keywords into title tags, and measured success by where you appeared on page one. The mental model was simple: get discovered → get clicked → get traffic.

Generative Engine Optimization optimizes for systems that encode knowledge. You build authority through entity relationships, structure information for extractability, and measure success by how you're cited when conversational search engines synthesize answers. The new mental model: get encoded → get synthesized → get attributed.

The underlying principles—trust signals, clear positioning, and extractable formatting—didn't change. What changed is the surface layer. Instead of ranking on a SERP, you're optimizing to be remembered by a system. And that requires a different approach.

Consider the data: conversational queries average 23 words, compared with roughly 4 words for a typical Google search. Users aren't typing "project management software"; they're asking "What's the best project management tool for a remote team of 15 with complex dependencies?" That's not a keyword. That's a question that requires synthesis and an understanding of user intent, and it is the kind of prompt that modern systems split into several sub-queries (query fan-out).

When ChatGPT or Perplexity answers that question, it doesn't return ten blue links. It synthesizes information from multiple sources and cites the ones it deems most relevant, structured, and trustworthy. Your job isn't to rank #1 anymore. Your job is to be one of the sources the system chooses to cite, and to control how you're framed in that answer, so you consistently show up in AI answers.

Why Traditional Tactics Break Down

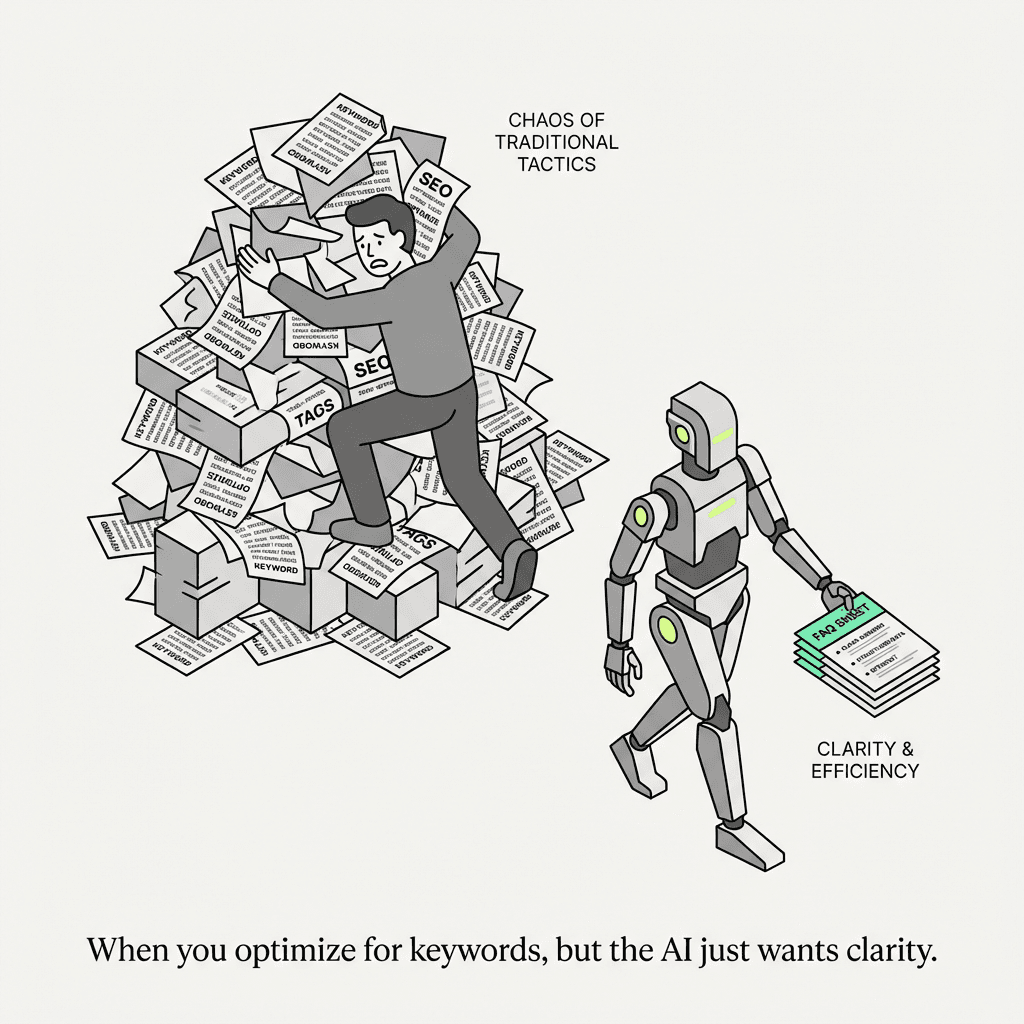

The tactics that worked for traditional optimization aren't dead—they're incomplete.

Keyword-stuffed material optimized for ranking algorithms fails in the machine learning era because content optimization for AI systems prioritizes extractability over density. A 3,000-word blog post optimized for "best CRM software" might rank well on Google. But if it's not structured with clear headings, self-contained paragraphs, and FAQ-style answers, a generative engine will skip it in favor of resources it can easily parse and cite.

Backlink obsession was always a proxy for authority. In this evolving landscape, authority is encoded differently. Systems look at entity relationships—how you're mentioned across platforms (Reddit, YouTube, industry publications), how you're associated with specific problem spaces, and whether you're consistently cited in high-trust contexts. A single backlink from TechCrunch matters less than being mentioned in 50 Reddit threads where your target users are asking questions.

Traffic-first mentality breaks down when conversational assistants answer the question without sending a click. If ChatGPT tells a user "Notion is best for small teams; Asana is better for complex projects," and the user never visits your website, your traffic metric is blind to the influence you just had. You were cited. You shaped the decision. But you'll never see it in Google Analytics. Revisit your SEO KPI framework so it captures this kind of influence.

40-60% of cited sources in generative responses change month-over-month. The game is volatile, but patterns are emerging. The sources that get cited consistently are the ones that understand the new rules and focus on quality over quantity.
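To make a churn figure like this concrete for your own tracked prompts, you can compare the set of sources cited in one month with the set cited in the next. The sketch below is only one possible definition (the share of last month's sources that dropped out); the article does not say how the 40-60% figure was measured, and the domain names and the `citation_churn` helper are hypothetical.

```python
def citation_churn(previous: set[str], current: set[str]) -> float:
    """Share of last month's cited sources that are no longer cited this month."""
    if not previous:
        return 0.0
    dropped = previous - current
    return len(dropped) / len(previous)


# Hypothetical domains cited for one tracked prompt in two consecutive months.
march = {"acme.example", "reddit.com", "g2.com", "techblog.example", "youtube.com"}
april = {"acme.example", "reddit.com", "capterra.com", "newsite.example", "youtube.com"}

churn = citation_churn(march, april)
print(f"{churn:.0%} of March's cited sources dropped out in April")
```

Tracking this per prompt, rather than as one global number, shows which questions have a stable set of cited sources and which are still up for grabs.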

| Dimension | Traditional Approach | Generative Engine Optimization |
|---|---|---|
| Objective | Rank on SERP | Get cited in synthesis |
| Authority Signal | Backlinks | Entity relationships + cross-platform mentions |
| Format | Keyword-optimized long-form | Structured, extractable (FAQs, bullets) |
| Measurement | Rankings, traffic | Citations, share of voice, sentiment |
| Discovery | Crawl → index → rank | Retrieve → synthesize → cite |

Why Don't Traditional Tactics Work for Conversational Search?

Machine learning systems don't just look for keyword density or backlink counts. They evaluate whether your information can be extracted cleanly, whether your brand has clear entity relationships across digital platforms, and whether you're consistently cited in high-trust environments. A perfectly optimized blog post that's unstructured and isolated on your domain will lose to a well-structured FAQ page that's reinforced by Reddit threads, YouTube demos, and third-party case studies. Featured snippets, a structured data strategy, and schema markup enhance this extractability.

The New Mental Model: From Page Rank to System Relevance

If traditional optimization was about earning machine trust, the shift is about earning system memory and understanding context.

Understanding the shift is one thing. Executing on it requires a different operating system. Machine learning engines don't just crawl and index pages—they retrieve information from across the web, synthesize it, and cite based on relevance, structure, and trust signals. This is what generative optimization actually means: not just ranking, but encoding.

How Conversational Search Engines Discover and Cite Information:

Discovery Layer: Systems retrieve information from across the web, not just your website. They pull from Reddit threads, YouTube demos, industry publications, and review sites. If you're not present where your audience asks questions, you're invisible to the engine. AI search competitor analysis tools can help you find the gaps.

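Evaluation Layer: Engines assess citability based on three factors: relevance to the question, structure that lets passages be pulled without losing context, and trust signals such as credible references and corroboration across sites and communities.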

Attribution Layer: Systems cite based on relevance, trust signals, and how you're framed in their knowledge graph. The question shifts from "Do I rank?" to "Am I cited? How am I framed? What context triggers my mention? Is my brand visibility in AI search improving over time?" If generative engines consistently associate your brand with a specific problem space, that's durable. System memory becomes a competitive moat and drives long-term success.

For teams building growth systems, whether through AI SEO agents that monitor citations, workflows that optimize for extractability, or unified platforms that connect research to execution, this shift requires rethinking the entire engine and adapting strategies for the future.

The Execution Framework

The strategic case is clear. Executing on it requires specific changes to how you create, structure, and distribute information across the evolving digital landscape.

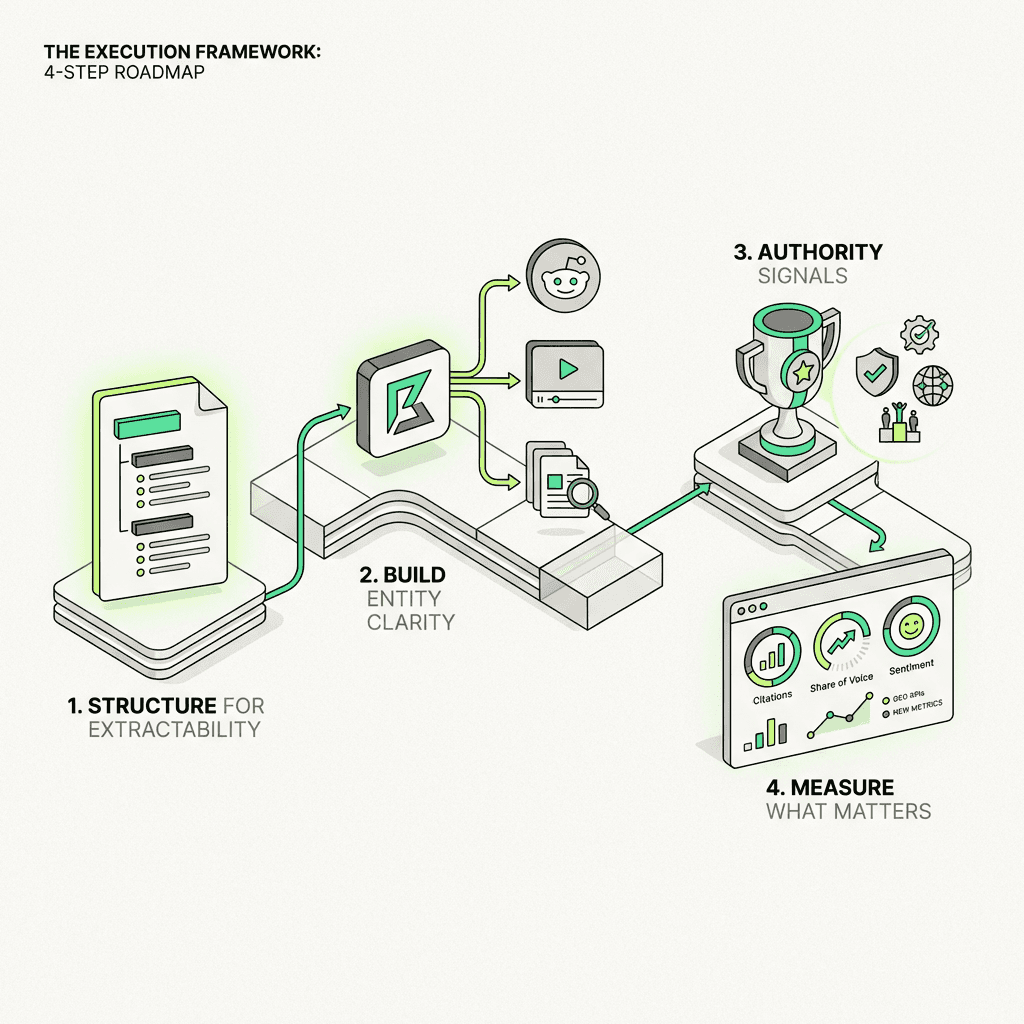

1. Structure for Extractability

To optimize for ChatGPT and other conversational search platforms, structure information for extractability first. Write in self-contained paragraphs. Machine learning systems extract specific passages, not full articles, so every paragraph should make sense on its own when it is lifted out of the page.
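A rough way to audit this at scale is a heuristic pass over each paragraph. The sketch below flags paragraphs that never name the brand, open with a context-dependent word, or contain no concrete figures. The three rules, the `DANGLING_OPENERS` list, and the sample sentences are illustrative assumptions, not an established standard and not a description of how any generative engine scores content.

```python
import re

# Openers that usually depend on an earlier paragraph for their meaning.
# This list is an illustrative assumption and is far from exhaustive.
DANGLING_OPENERS = ("our ", "we ", "it ", "this ", "these ", "they ", "that ")


def extractability_issues(paragraph: str, entity: str) -> list[str]:
    """Return reasons a paragraph may not stand alone when quoted out of context."""
    issues = []
    text = paragraph.strip()
    if entity.lower() not in text.lower():
        issues.append("never names the entity")
    if text.lower().startswith(DANGLING_OPENERS):
        issues.append("opens with a context-dependent word")
    if not re.search(r"\d", text):
        issues.append("contains no concrete figures")
    return issues


# Hypothetical sentences about the fictional product "Acme".
weak = "It also integrates with many tools and is easy to set up."
strong = (
    "Acme integrates with 50+ tools, including Slack and Asana, "
    "and setup takes less than 10 minutes."
)

print(extractability_issues(weak, "Acme"))
print(extractability_issues(strong, "Acme"))
```

A check like this will produce false positives, so treat its output as a review queue for an editor rather than a pass/fail gate.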

Before (poorly structured):

"Our platform helps teams collaborate better. We offer a range of features including real-time editing, version control, and integrations. Many companies use us because we're easy to set up and our pricing is flexible."

After (extractable):

"Acme is a collaborative workspace platform designed for remote teams of 10-50 people. The platform combines real-time document editing, automatic version control, and 50+ integrations with tools like Slack and Asana. Setup takes less than 10 minutes, and pricing starts at $8/user/month."

Use clear headings to signal which section answers which question. Prioritize structured formats (FAQs, bullet points, comparison tables, and short snippet-style answers) over long-form prose. Implement schema markup to help search engines and voice assistants understand your information architecture, and build these steps into your AI content pipeline.
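As one example of schema markup, an FAQ section can be described with schema.org's `FAQPage` type, serialized as JSON-LD. The sketch below generates that markup from question and answer pairs. The `faq_jsonld` helper is hypothetical, the questions reuse the fictional Acme details from this section, and whether any given engine actually uses the markup is not something this code can guarantee.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Hypothetical FAQ content for the fictional product "Acme".
markup = faq_jsonld(
    [
        ("How long does Acme take to set up?", "Setup takes less than 10 minutes."),
        ("How much does Acme cost?", "Pricing starts at $8/user/month."),
    ]
)

# Embed the result in the page inside a JSON-LD script tag.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Generating the markup from the same source as the visible FAQ text keeps the two in sync, which matters because the structured data should describe content that is actually on the page.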

2. Build Entity Clarity Across Digital Platforms

Your website is the foundation, but systems don't just crawl your blog. They pull from Reddit, Quora, YouTube, and industry publications. Be strategic about where you show up and enhance your online presence. Answer questions in communities where your target users ask them. Publish case studies on third-party sites. Make it clear, across every platform, who you are, what you solve, and for whom. This cross-platform approach strengthens your semantic footprint and builds key entity relationships, which are the core of entity-based SEO.

3. Authority Signals That Transfer to Machine Learning Systems

E-E-A-T still matters, but optimization for conversational search expresses it differently:

Experience: Real case studies and execution insights that demonstrate practical understanding, not generic how-tos

Expertise: Depth, nuance, contrarian takes that demonstrate you've actually done the work and provide valuable insights

Authority: Citations from credible sources, cross-platform mentions, consistent positioning that voice search platforms recognize

Trust: Transparency, clear attribution, verifiable data that builds user trust and engagement

These practices enhance your relevance across both traditional search engines and voice assistants, ensuring your expertise is recognized in the evolving landscape of online interactions.

4. Measure What Matters

Traditional metrics—rankings, traffic—tell part of the story. Modern optimization adds new measurement layers and key performance indicators.

How Do You Measure Performance?

Track both traditional metrics (rankings, traffic) and new dimensions within a unified SEO KPI framework:

Visibility score: How often you're cited in generative responses across different platforms

Share of voice: Your visibility vs. competitors in conversational engines and featured snippets

Sentiment: Positive, neutral, or negative framing in citations

Context/prompt: Which conversational queries trigger your brand mention, and what user intent they reveal
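If you sample generative answers for a fixed set of prompts yourself, three of these dimensions reduce to simple counting. The sketch below assumes a hypothetical `SampledAnswer` record holding a prompt and the brands cited in the response; the brand names and data are invented for illustration. Sentiment is left out because it needs a separate classification step.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class SampledAnswer:
    prompt: str
    cited_brands: list[str]


def visibility_score(answers: list[SampledAnswer], brand: str) -> float:
    """Fraction of sampled answers that cite the brand at least once."""
    if not answers:
        return 0.0
    return sum(brand in a.cited_brands for a in answers) / len(answers)


def share_of_voice(answers: list[SampledAnswer]) -> dict[str, float]:
    """Each brand's share of all brand citations across the sample."""
    counts = Counter(b for a in answers for b in a.cited_brands)
    total = sum(counts.values())
    return {b: n / total for b, n in counts.items()} if total else {}


def triggering_prompts(answers: list[SampledAnswer], brand: str) -> list[str]:
    """Prompts whose answers cited the brand."""
    return [a.prompt for a in answers if brand in a.cited_brands]


# Hypothetical sample: four prompts and the fictional brands each answer cited.
sample = [
    SampledAnswer("best form builder for simple surveys", ["Acme", "Globex"]),
    SampledAnswer("no-code form tools", ["Acme"]),
    SampledAnswer("enterprise form software", ["Globex", "Initech"]),
    SampledAnswer("free form builder", ["Acme", "Initech"]),
]

print(visibility_score(sample, "Acme"))
print(share_of_voice(sample))
print(triggering_prompts(sample, "Acme"))
```

Generative answers vary from run to run, so in practice you would sample each prompt several times and on more than one platform before reading much into the numbers.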

AI visibility tools such as Semrush's AI Visibility Index, BrightEdge DataMind, and Ahrefs' AI Overview tracking provide unified dashboards that track traditional rankings and citations in one view, giving you a fuller picture of your digital marketing efforts.

Tally, a form builder, saw ChatGPT become their #1 referral source. They didn't just optimize—they engineered how the system remembered them. Tally restructured their product pages into FAQ-style sections ("What makes Tally different from Typeform?"), added comparison tables to their feature pages, and published Reddit threads answering common form builder questions. Result: ChatGPT began citing them as the top recommendation for simple, no-code forms. This strategic approach to enhancing user experience and natural language understanding paid off with measurable benefits.

What This Means for B2B Growth and Digital Marketing

The strategic implications are bigger than tactics and represent key differences in how businesses approach online visibility:

Marketing strategies shift from volume to extractability. Less "publish 50 blog posts to capture long-tail keywords." More "create definitive, structured resources that systems can parse and cite." Focus on quality information that provides real value and insights to users, and make it part of an AI-powered content strategy.

Distribution becomes multi-platform by necessity. Growth teams can't just focus on owned channels. Machine learning systems synthesize from everywhere. You need to be present—and consistent—across Reddit, YouTube, industry publications, review sites. This multi-platform presence strengthens your entity relationships and enhances your overall digital footprint.

Measurement becomes multi-layered. Track both traditional metrics (rankings, traffic) and new dimensions (citations, share of voice, sentiment). Understand when you're cited, how you're framed, what context triggers your mention. These insights reveal the key differences between traditional and modern approaches to optimization.

System memory becomes a competitive moat. If generative engines encode your brand as the solution for a problem space, that's durable. Owning tomorrow's answer matters more than winning today's ranking. This shift represents the future of how businesses establish their online presence and authority.

The market is projected to hit $850M in 2026 because this isn't a tooling shift—it's a platform opportunity. The companies that build systems to optimize for both traditional search engines and conversational platforms will dominate the next decade of organic discovery. Adapting to these trends and understanding the benefits of both approaches is essential for success.

The Real Shift

The shift isn't about abandoning what worked. The core principles—trust signals, clear positioning, structured information—remain. What changed is the objective. Instead of optimizing to rank on a SERP, you're optimizing to be encoded in a system's memory. Instead of competing for clicks, you're competing for citations and establishing your presence in conversational search results.

This evolution isn't about choosing one over the other. Both matter. But if you rank and generative engines don't cite you, you're invisible to the next generation of search. The key is understanding the differences between traditional and modern approaches, recognizing the benefits of each, and implementing best practices that work across both landscapes. Make this the core of your AI marketing strategy.

The machines got smarter through natural language processing and semantic understanding. The game got more complex, requiring new techniques and strategies. And the teams that understand this distinction—who focus on user intent, voice search optimization, featured snippets, schema markup, and cross-platform entity relationships—will own the next decade of organic discovery and digital marketing success.

FAQs

What is Generative Engine Optimization (GEO)?

Generative Engine Optimization (GEO) is the practice of structuring and distributing information so AI systems (e.g., Google AI Overviews, ChatGPT-style assistants) can retrieve it, synthesize it, and cite it in generated answers. Unlike traditional SEO, the goal is less "rank a page" and more "be a trusted source in the answer."

What's the difference between GEO and traditional SEO?

Traditional SEO primarily optimizes for SERP rankings and clicks (crawl → index → rank). GEO optimizes for citability and knowledge encoding (retrieve → synthesize → cite), emphasizing extractable structure, entity clarity, and authority signals that transfer across platforms.

Is GEO replacing SEO?

No—GEO extends SEO. You still need strong technical fundamentals, clear topical relevance, and authority, but success is increasingly measured by citations and visibility inside AI-generated responses, not just rankings and sessions.

Why do "ranking tactics" alone break down in conversational search?

Generative systems can answer without a click, so traffic and rank don't fully reflect influence. They also prefer content that's easy to extract (clear headings, self-contained paragraphs, lists/FAQs) and sources reinforced by consistent mentions across the web—not just a single well-optimized page.

How do AI Overviews and chat-based assistants choose what to cite?

They tend to cite sources that are (1) directly relevant to the question, (2) structured so passages can be pulled without losing context, and (3) supported by trust/authority signals (credible references, consistent entity relationships, and corroboration across multiple sites and communities).

What does "structure for extractability" mean in GEO?

It means writing so individual passages can stand alone: crisp definitions, short paragraphs, descriptive headings, bullets, and comparison tables where appropriate. Adding FAQ-style sections and (when relevant) schema markup can improve machine readability and reduce ambiguity.

What are "entity relationships," and why do they matter for GEO?

Entity relationships are the consistent associations between your brand and specific topics, problems, categories, and peers across the web (e.g., being repeatedly mentioned alongside a use case or competitor set). Strong, consistent entity signals help systems place you correctly in their knowledge graph and recall you in the right contexts.

How should teams measure GEO performance if clicks decline?

Track citations/mentions in AI answers, share of voice versus competitors, and sentiment/framing (positive/neutral/negative), alongside traditional metrics like rankings and qualified organic conversions. A practical approach is to monitor which prompts trigger your brand and whether you're cited as a primary source or a throwaway mention.

What's the fastest way to start implementing GEO on an existing SEO program?

Start by updating your highest-intent pages: add a tight definition section, an FAQ block, and a comparison/decision section aligned to real buyer questions. Then reinforce the same positioning off-site (industry publications, community Q&A, YouTube demos, reviews) so the system sees consistent signals across platforms—Metaflow's frameworks on structuring for AI answers can help operationalize this without abandoning classic SEO fundamentals.

What does "answer ownership" mean for B2B SaaS?

Answer ownership means your brand becomes one of the default cited sources when prospects ask high-intent questions (comparisons, "best for X," implementation, pricing/ROI). The moat is durable system memory: repeated, accurate citations that shape how the market understands your category and where you fit—something Metaflow emphasizes by combining extractable content with cross-platform distribution and measurable citation visibility.