TL;DR:

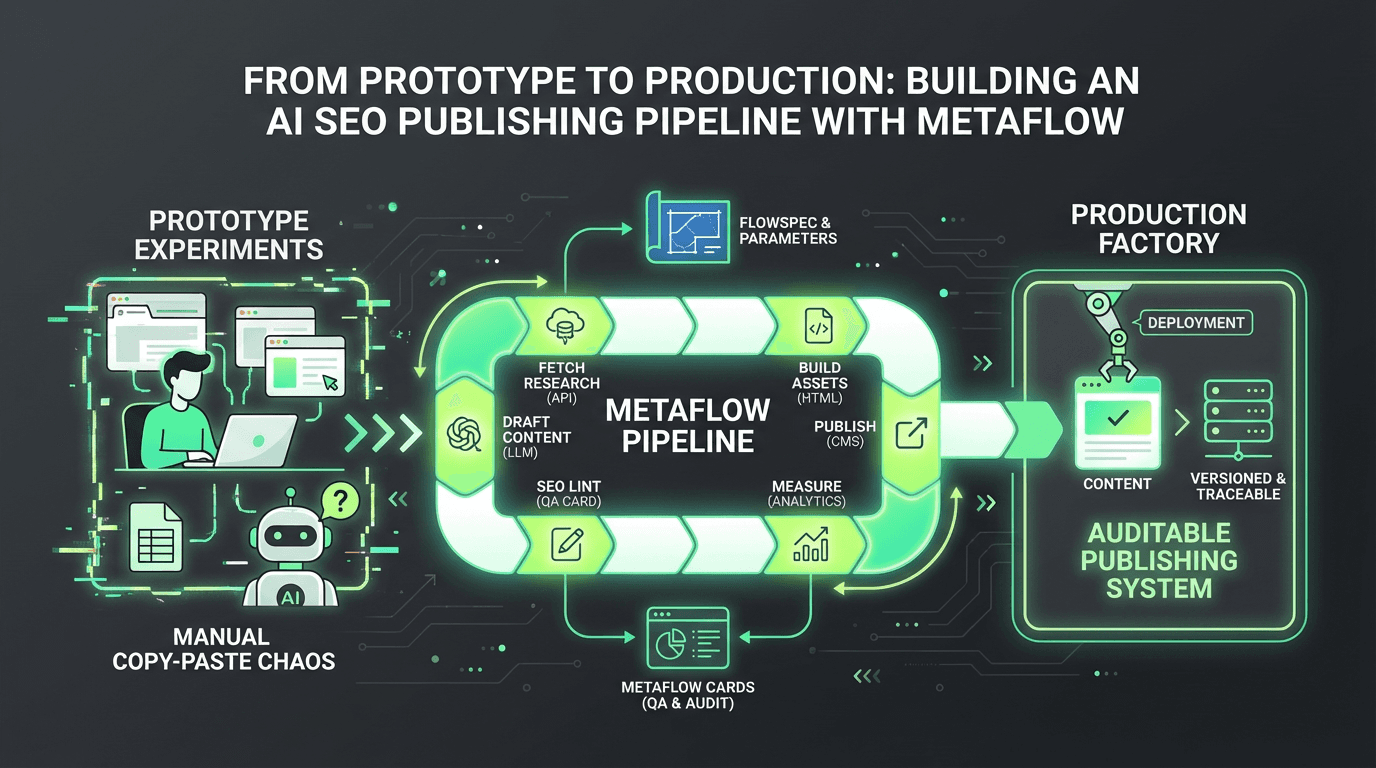

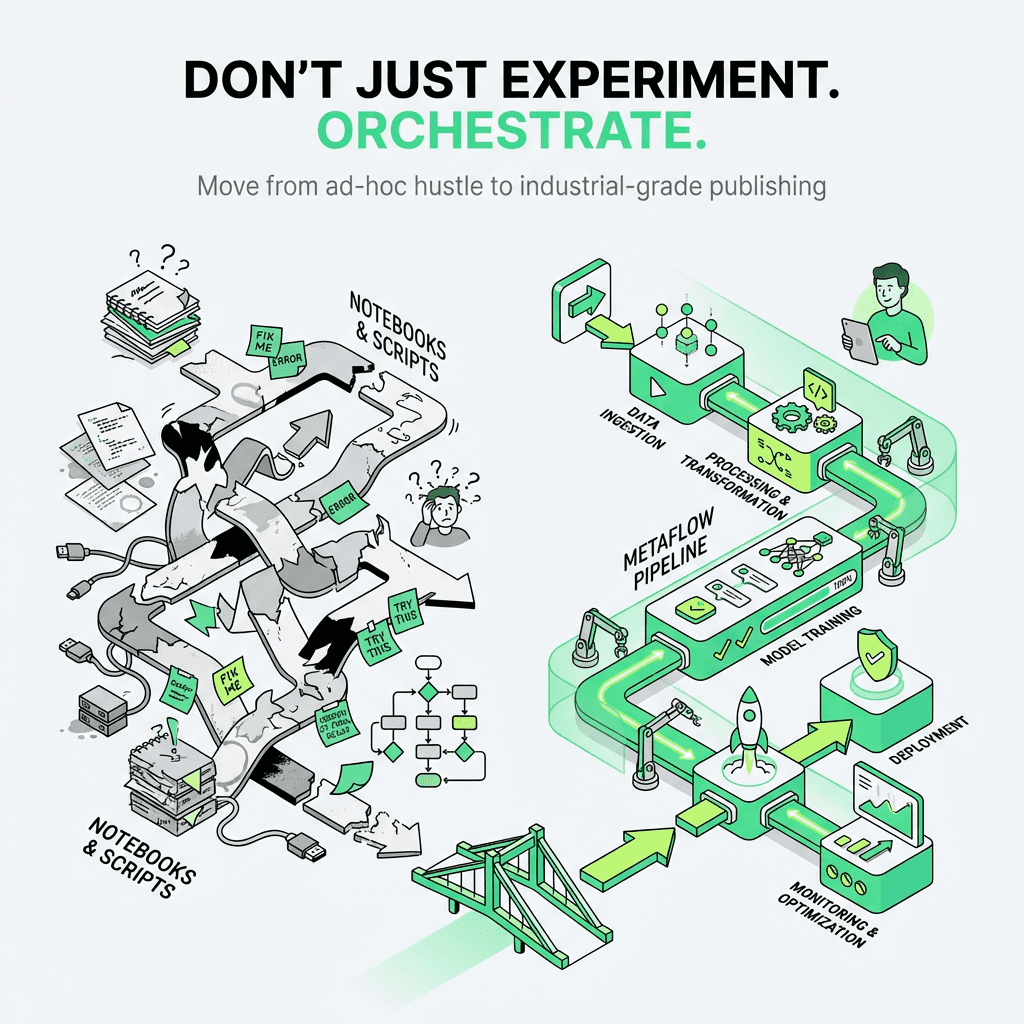

Metaflow turns ad-hoc AI experiments into production-grade, reproducible workflows, versioning every run's code, parameters, and artifacts so data science teams get a complete audit trail

Metaflow FlowSpec defines your publishing system as a DAG of steps; Metaflow Parameter objects enable dynamic, reusable campaigns without code changes

Step decorators (`@retry`, `@timeout`, `@catch`, `@card`) add resilience, error handling, and human-readable QA reports to each stage for debugging

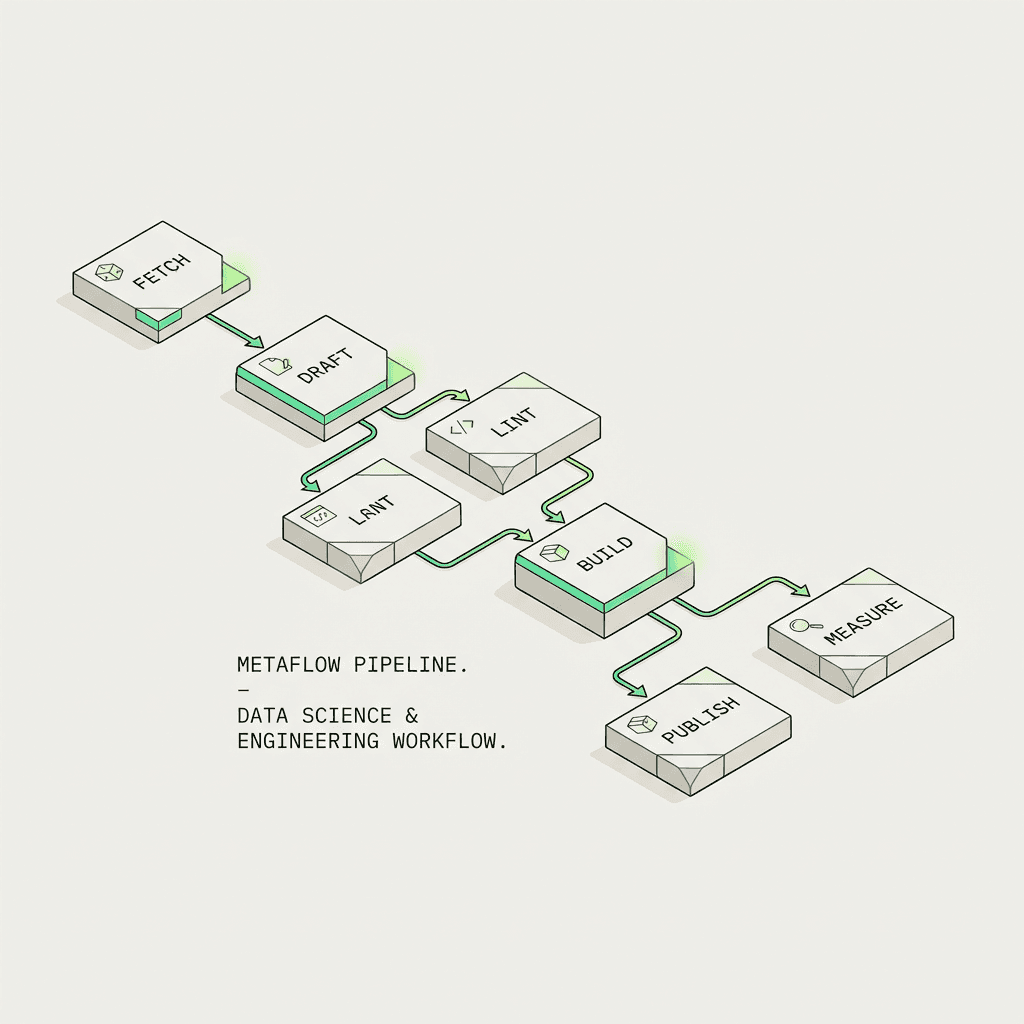

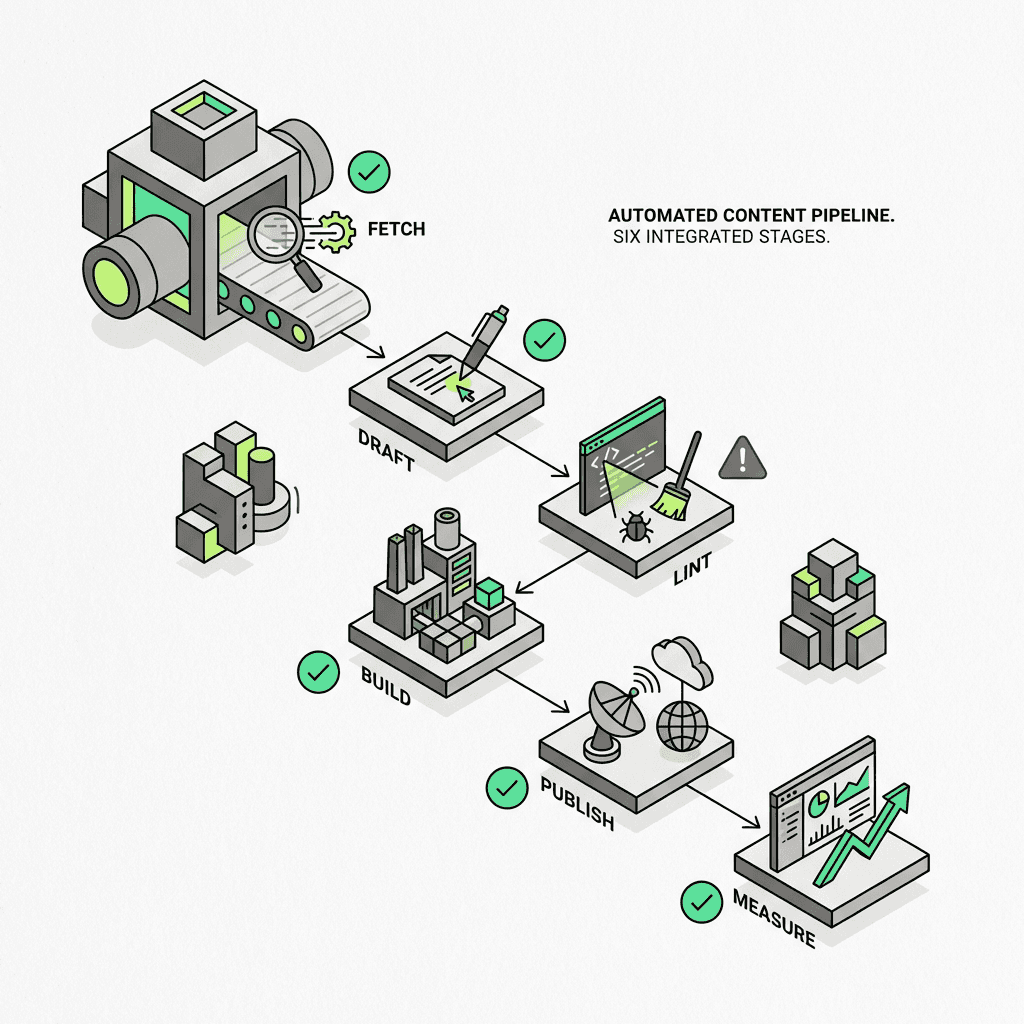

Build end-to-end automation pipelines (research→draft→SEO lint→build→publish→measure) with every artifact versioned and traceable in the datastore

Metaflow cards give non-technical stakeholders visual reports of scores, keyword density, and validation gates to review before anything is published

Unlike notebooks or scripts, Metaflow provides reproducibility, observability, and collaboration in plain Python, turning "we use ChatGPT sometimes" into "we have an auditable publishing factory"

Acts as the orchestration layer that ties your existing SEO automation tools (Ahrefs, SEMrush, WordPress, analytics) together through their APIs

Real-world use cases: programmatic SEO at scale, automated content refreshes, multi-channel publishing with parallel steps, and compliance workflows with manual review gates

The infrastructure layer that makes AI SEO agents and AI marketing agents production-ready, not fragile prototypes

If you've ever found yourself manually copying prompts between ChatGPT tabs, juggling spreadsheets of keywords, and wondering whether your last blog post used the good prompt or the old prompt, you're not alone. Most marketing teams live in this liminal space between "we use AI sometimes" and "we have a system." The gap isn't capability; it's infrastructure.

Enter Metaflow: a production-grade orchestration framework that transforms ad-hoc AI experiments into reproducible, auditable workflows. Originally built at Netflix to manage machine learning at scale, Metaflow has quietly become the secret weapon for growth teams who need to ship AI-powered publishing systems without losing their minds—or their audit trail.

This isn't about replacing human creativity. It's about building a content automation system that handles the tedious, error-prone work—research, SEO validation, formatting, publishing—so your team can focus on strategy, storytelling, and the kind of high-leverage decisions that actually move metrics.

By the end of this guide, you'll understand how to turn every blog post into a versioned, traceable process using Metaflow FlowSpec, Metaflow Parameter objects, step decorators, and Metaflow cards. You'll see how to orchestrate research→draft→SEO lint→publish→measure with human-readable QA reports at every gate. And you'll learn why this approach is a solid foundation for serious AI SEO agent deployments and for the next generation of AI marketing automation platforms.

The Problem: AI Content Tools Are Powerful, But Fragile

The promise of AI for SEO is intoxicating. Generate outlines in seconds. Draft long-form articles with a single prompt. Optimize meta descriptions at scale. But the reality is messier:

Prompt drift: Your best-performing prompt lives in a Slack thread from three weeks ago.

No version control: You can't reproduce last month's winner because you tweaked the temperature mid-campaign.

Manual handoffs: Someone still has to copy-paste from Claude to WordPress, then remember to update the sitemap.

Zero auditability: When a post underperforms, you have no idea which stage failed—the research, the draft, the keyword targeting?

Traditional SEO automation tools handle parts of this—keyword research, rank tracking, backlink monitoring—but they don't orchestrate the end-to-end publishing process. You're left stitching together Zapier chains, Google Sheets, and prayer.

Metaflow solves this by treating each piece of published work as a parameterized, versioned DAG. Every stage—from keyword extraction to final deployment—is a discrete, testable unit. Every artifact—the research JSON, the draft Markdown, the validation report—is stored and traceable. If something breaks, you know exactly where. If something works, you can reproduce it tomorrow, next quarter, or next year.

What Is a Metaflow Pipeline? Core Concepts Explained

Before we build, let's define terms. A Metaflow pipeline is a directed acyclic graph (DAG) of steps that transform inputs into outputs. Think of it as a recipe, but one that remembers every ingredient, every temperature adjustment, and every taster's feedback.

Metaflow FlowSpec: The Blueprint

Every Metaflow flow is a class that inherits from `FlowSpec`, the base class that contains all of your logic. A workflow is just a Python class with decorated methods:

Each `@step` decorator marks a stage in your orchestration. Metaflow handles execution order, data passing, retries, and logging. You just write the business logic.

Metaflow Parameter: Dynamic Inputs

Hard-coded systems are brittle. Metaflow Parameter objects let you inject configuration at runtime—target keyword, brief URL, publish date, tone of voice:

Now you can run the same orchestration for different campaigns without touching code:
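Each campaign's command line is `python <flow_file> run` followed by one `--<parameter> <value>` pair per input. The small Python helper below builds those commands for a batch of campaigns. The helper, the file name `campaign_flow.py`, and the values are all hypothetical.

```python
import shlex
from typing import Dict, List


def build_run_command(flow_file: str, params: Dict[str, str]) -> List[str]:
    """Return the argv for `python <flow_file> run --<name> <value> ...`."""
    cmd = ["python", flow_file, "run"]
    for name, value in params.items():
        # Metaflow exposes each Parameter as a --<name> option.
        cmd.extend([f"--{name}", str(value)])
    return cmd


# Hypothetical campaigns: same flow file, different inputs, no code changes.
campaigns = [
    {"primary_keyword": "metaflow seo pipeline", "tone": "practical"},
    {"primary_keyword": "ai content refresh", "tone": "conversational"},
]

for params in campaigns:
    # shlex.join quotes values containing spaces so the line can be pasted into a shell.
    print(shlex.join(build_run_command("campaign_flow.py", params)))
```

To launch runs from another script instead of a shell, pass the list form to `subprocess.run(..., check=True)`.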

Parameters make your AI marketing agents reusable. One definition, infinite campaigns.

Step Decorators: Superpowers for Each Stage

Step decorators are where Metaflow shines. Beyond `@step`, you get:

`@retry`: Automatically re-run a step that fails on flaky API calls (looking at you, OpenAI rate limits).

`@timeout`: Kill runaway LLM generations after N seconds.

`@catch`: Gracefully handle failures without killing the entire execution.

`@resources`: Request specific CPU/memory for heavy tasks.

`@card`: Generate rich, visual QA reports (more on this below).

Example—robust LLM draft stage with retry and timeout:

If the API hiccups, Metaflow retries. If the LLM hangs, Metaflow kills it. You get resilience without boilerplate.

Building Your Content Automation Pipeline: A Step-by-Step Blueprint

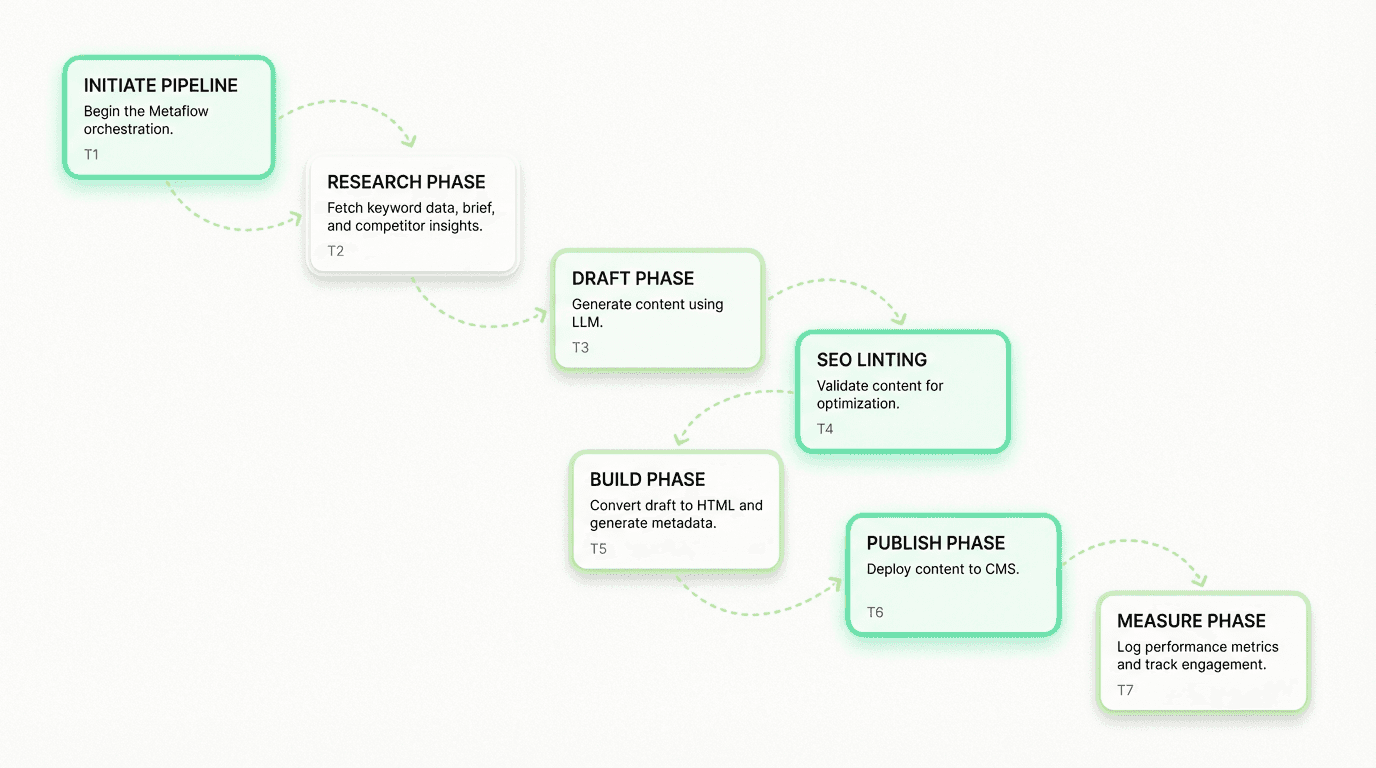

Let's build a real publishing system for AI-powered blog articles. The stages:

Fetch: Pull keyword data, competitor analysis, internal brief.

Draft: Generate long-form articles via LLM.

Lint: Validate optimization—keyword density, readability, meta tags.

Build: Render Markdown to HTML, generate social cards.

Publish: Push to CMS (WordPress, Webflow, headless).

Measure: Log to analytics, set up rank tracking.

Each stage is a `@step`. Each artifact is versioned. Each decision point has a Metaflow card for human review.

Step 1: Fetch Research Artifacts
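The fetch step typically pulls raw keyword rows from an SEO API and narrows them to targets. Below is a minimal sketch of that narrowing logic in plain Python. It assumes each row is a dict with `keyword`, `volume`, and `difficulty` fields; that shape is illustrative, not any vendor's schema. The thresholds are placeholders too.

```python
from typing import Any, Dict, List

KeywordRow = Dict[str, Any]  # assumed shape: keyword, volume, difficulty


def select_keywords(rows: List[KeywordRow], min_volume: int = 100,
                    max_difficulty: int = 40, limit: int = 5) -> List[KeywordRow]:
    """Keep keywords worth targeting, highest search volume first."""
    eligible = [
        row for row in rows
        if row["volume"] >= min_volume and row["difficulty"] <= max_difficulty
    ]
    eligible.sort(key=lambda row: row["volume"], reverse=True)
    return eligible[:limit]


# Made-up rows standing in for an API response.
rows = [
    {"keyword": "metaflow tutorial", "volume": 900, "difficulty": 35},
    {"keyword": "metaflow vs airflow", "volume": 400, "difficulty": 55},
    {"keyword": "metaflow cards", "volume": 150, "difficulty": 10},
    {"keyword": "metaflow seo", "volume": 40, "difficulty": 5},
]

# Inside the fetch_research step: self.keyword_data = select_keywords(rows)
keyword_data = select_keywords(rows)
print([row["keyword"] for row in keyword_data])  # ['metaflow tutorial', 'metaflow cards']
```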

All research artifacts (`self.brief`, `self.keyword_data`, `self.competitor_content`) are now stored in Metaflow's versioned datastore. You can inspect them weeks later through Metaflow's Client API, for example `Run("<FlowName>/<run_id>").data.brief`. This is the groundwork for effective AI workflows for growth: every asset is systematically managed.

Step 2: Draft Content with LLM
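Decorators handle failures, but reproducibility also depends on storing the exact prompt and model settings next to the output. Below is a sketch of a deterministic prompt builder. `build_draft_prompt`, the brief fields, and the commented-out `call_your_llm` call are all hypothetical.

```python
from typing import Dict, List


def build_draft_prompt(brief: Dict[str, str], keywords: List[str], tone: str) -> str:
    """Assemble the prompt deterministically so the exact text can be stored and replayed."""
    lines = [
        f"Write a long-form article titled: {brief['title']}",
        f"Audience: {brief['audience']}",
        f"Tone: {tone}",
        f"Primary keyword: {keywords[0]}",
        "Secondary keywords: " + ", ".join(keywords[1:]),
        "Format: Markdown with H2 sections and a short FAQ.",
    ]
    return "\n".join(lines)


brief = {"title": "Metaflow for SEO Teams", "audience": "growth marketers"}
prompt = build_draft_prompt(brief, ["metaflow tutorial", "metaflow cards"], tone="practical")

# Inside the draft_content step (call_your_llm is hypothetical):
#   self.prompt = prompt
#   self.llm_settings = {"model": "<model-name>", "temperature": 0.4}
#   self.draft_markdown = call_your_llm(self.prompt, **self.llm_settings)
print(prompt)
```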

The `@retry` decorator handles transient API failures. The `@timeout` decorator prevents runaway costs. The draft is stored as `self.draft_markdown`—versioned, reproducible, auditable.

Step 3: SEO Lint and Validation
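Below is a sketch of the checks this gate might run. The thresholds and the function names are assumptions for illustration:

- 1,500 words minimum
- keyword density between 0.5 and 2.5%
- a meta description of 70 to 160 characters

A failing check raises an error, which fails the step and stops the run before anything is published.

```python
import re
from typing import Any, Dict, List, Tuple


def words(text: str) -> List[str]:
    """Lowercased word tokens (letters, digits, apostrophes)."""
    return re.findall(r"[a-z0-9']+", text.lower())


def keyword_density(text: str, keyword: str) -> float:
    """Keyword-phrase occurrences per 100 words, as a percentage."""
    total = len(words(text))
    if total == 0:
        return 0.0
    pattern = r"\b" + re.escape(keyword.lower()) + r"\b"
    return 100.0 * len(re.findall(pattern, text.lower())) / total


def lint_seo(markdown: str, keyword: str, meta_description: str,
             min_words: int = 1500,
             density_range: Tuple[float, float] = (0.5, 2.5)) -> Dict[str, Any]:
    density = keyword_density(markdown, keyword)
    word_count = len(words(markdown))
    checks = {
        "word_count": word_count >= min_words,
        "keyword_density": density_range[0] <= density <= density_range[1],
        "meta_description_length": 70 <= len(meta_description) <= 160,
        "keyword_in_h1": bool(re.search(r"^# .*" + re.escape(keyword), markdown, re.I | re.M)),
    }
    return {"word_count": word_count, "density_pct": round(density, 2),
            "checks": checks, "passed": all(checks.values())}


def enforce(report: Dict[str, Any]) -> None:
    """Raise so the step fails, and the run stops, before publishing."""
    failed = [name for name, ok in report["checks"].items() if not ok]
    if failed:
        raise ValueError("SEO lint failed: " + ", ".join(failed))


# Inside the lint_seo step:
#   self.seo_report = lint_seo(self.draft_markdown, self.primary_keyword, self.meta_description)
#   enforce(self.seo_report)
```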

This is a QA gate. If the article doesn't meet optimization thresholds, execution halts. No bad work reaches production. The `self.seo_report` dictionary is stored—you can debug failures later without guessing.

Step 4: Build Publication Assets
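Below is a sketch of the packaging logic; the helper names are hypothetical. `markdown_to_html` is a deliberately tiny stand-in that only handles `#`/`##` headings and paragraphs; in practice you would use a full Markdown library. The payload keys (`title`, `slug`, `content`, `excerpt`) are chosen to match WordPress post fields.

```python
import html
import re
from typing import Dict


def slugify(title: str) -> str:
    """Lowercase, collapse non-alphanumeric runs into single hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")


def markdown_to_html(md: str) -> str:
    """Toy renderer: '#'/'##' headings and plain paragraphs only."""
    out = []
    for block in (b.strip() for b in md.split("\n\n")):
        if not block:
            continue
        if block.startswith("## "):
            out.append(f"<h2>{html.escape(block[3:])}</h2>")
        elif block.startswith("# "):
            out.append(f"<h1>{html.escape(block[2:])}</h1>")
        else:
            out.append(f"<p>{html.escape(block)}</p>")
    return "\n".join(out)


def build_cms_payload(title: str, md: str, meta_description: str) -> Dict[str, str]:
    """Bundle everything the publish step needs; keys mirror WordPress post fields."""
    return {
        "title": title,
        "slug": slugify(title),
        "content": markdown_to_html(md),
        "excerpt": meta_description,
    }


# Inside the build step:
#   self.cms_payload = build_cms_payload(self.title, self.draft_markdown, self.meta_description)
```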

Everything needed for deployment is now packaged in `self.cms_payload`. This is the handoff point between automation and your CMS, and a crucial stage in AI workflow automation for growth.

Step 5: Publish to CMS
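Below is a sketch for WordPress using only the standard library. It assumes the core REST API (`/wp-json/wp/v2/posts`) with an Application Password for Basic auth. The site URL, credentials, and environment variable names are placeholders. The post is created as a `draft` by default, so a human still flips the final switch.

```python
import base64
import json
import urllib.request
from typing import Dict


def build_wordpress_request(site: str, user: str, app_password: str,
                            payload: Dict[str, str],
                            status: str = "draft") -> urllib.request.Request:
    """Prepare, without sending, a POST to the WordPress posts endpoint."""
    body = json.dumps({**payload, "status": status}).encode("utf-8")
    token = base64.b64encode(f"{user}:{app_password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url=site.rstrip("/") + "/wp-json/wp/v2/posts",
        data=body,
        headers={"Content-Type": "application/json", "Authorization": f"Basic {token}"},
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the new post's URL (WordPress returns it as `link`)."""
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.loads(response.read())["link"]


# Inside the publish step (environment variable names are hypothetical):
#   req = build_wordpress_request(os.environ["WP_SITE"], os.environ["WP_USER"],
#                                 os.environ["WP_APP_PASSWORD"], self.cms_payload)
#   self.published_url = send(req)
```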

The orchestration doesn't just draft—it ships. `self.published_url` is logged. You have a permanent record of what went live, when, and from which run.

Step 6: Measure and Track
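Below is a sketch of the record the measure step could write to your warehouse or rank tracker. The field names are assumptions. The key idea is to store the run's `Flow/run_id` pathspec so a ranking can be traced back to its run. Inside a step, both parts are available as `current.flow_name` and `current.run_id`.

```python
import json
from datetime import datetime, timezone
from typing import Dict


def measurement_record(published_url: str, primary_keyword: str, flow_name: str,
                       run_id: str, published_at: datetime) -> Dict[str, str]:
    """One row for a warehouse or rank tracker, keyed back to the Metaflow run."""
    return {
        "url": published_url,
        "primary_keyword": primary_keyword,
        "run_pathspec": f"{flow_name}/{run_id}",  # Metaflow's Flow/RunId path format
        "published_at": published_at.astimezone(timezone.utc).isoformat(),
    }


# Inside the measure step (current comes from `from metaflow import current`):
#   self.measurement = measurement_record(self.published_url, self.primary_keyword,
#                                         current.flow_name, current.run_id,
#                                         datetime.now(timezone.utc))
record = measurement_record("https://example.com/metaflow-seo", "metaflow seo",
                            "SEOPipelineFlow", "1712",
                            datetime(2025, 1, 31, 9, 0, tzinfo=timezone.utc))
print(json.dumps(record))
```

Later, `Run(record["run_pathspec"])` from Metaflow's Client API opens that exact run. That includes whatever prompt and brief the run stored as artifacts.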

Now you have closed-loop attribution. When this post ranks in 30 days, you can trace it back to this exact run—which prompt, which model, which brief version.

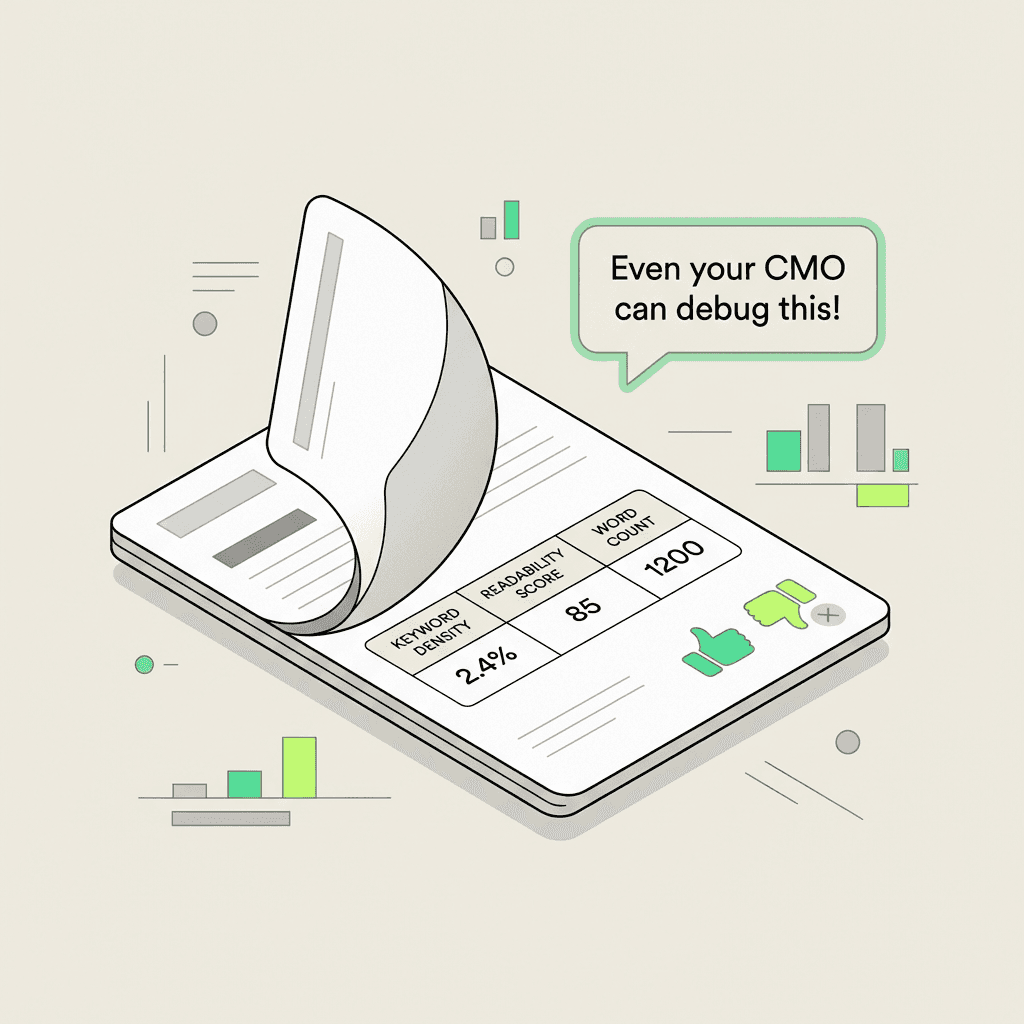

Metaflow Cards: Human-Readable QA Reports

Code is great. But when your CMO asks "why did this post fail validation?" you don't want to `grep` logs. Metaflow cards generate rich, visual reports embedded in the UI for easy debugging.

Add the `@card` decorator to any stage:

Now, when you run `python your_flow.py card view lint_seo` (substituting your flow file and step name), you get a browser-based report showing:

Keyword density: ✅ 0.8%

Readability score: ✅ 65 (Flesch Reading Ease)

Word count: ❌ 1,200 (target: 1,500+)

Non-technical stakeholders can review QA gates without touching a terminal. Cards support tables, charts, images, and Markdown, which makes them a good fit for AI agents for marketing that need human oversight before deployment.

Why This Matters: From Experiments to Production-Grade AI SEO Agents

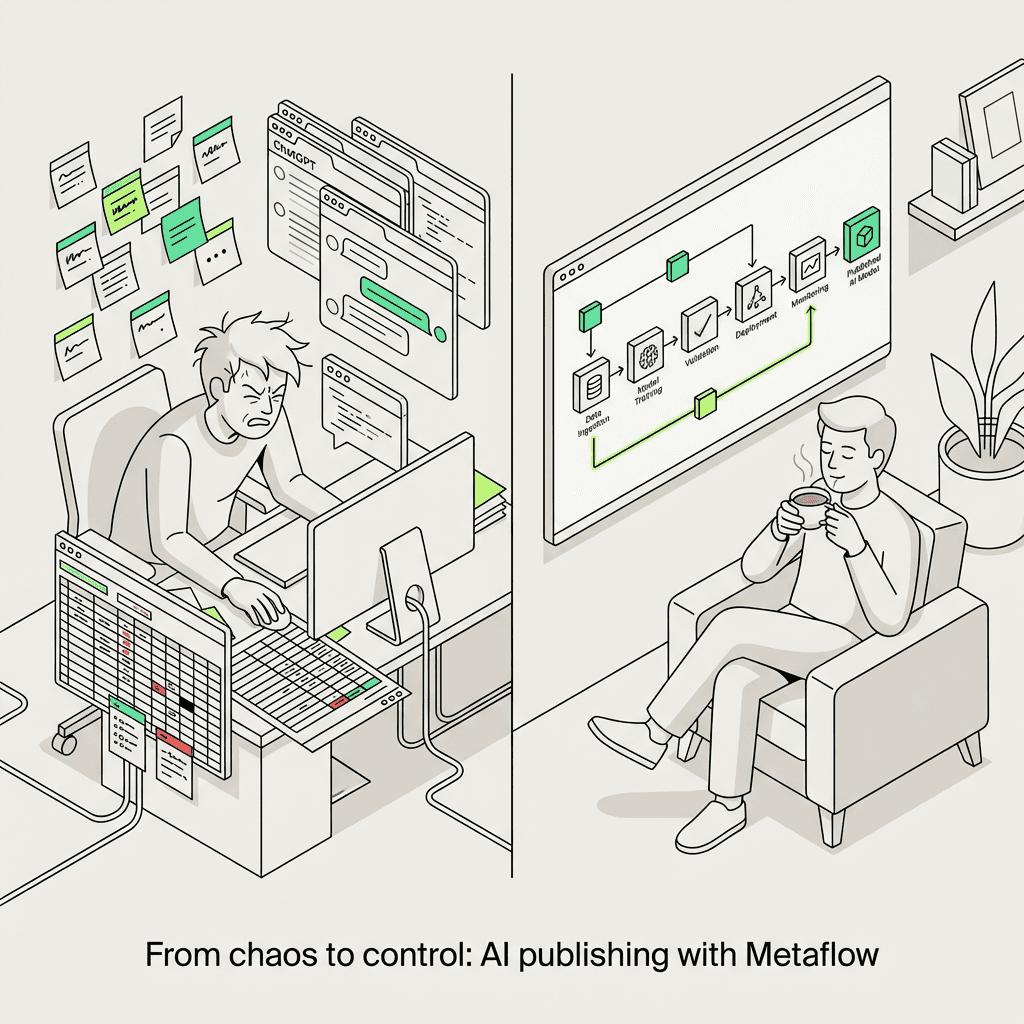

Most teams treat AI as a tool: a better text editor. The Metaflow approach treats it as infrastructure. The difference:

Without Metaflow (The Old Way)

Prompts live in Google Docs or Notion.

Someone manually runs them in ChatGPT.

Drafts are copy-pasted into WordPress.

Optimization checks happen... sometimes?

No version control. No audit trail. No reproducibility.

When something breaks, you start from scratch.

With Metaflow (The Production Way)

Prompts are versioned Python code.

**Metaflow Parameter** objects let you A/B test tone, model temperature, and configuration.

**Step decorators** handle retries, timeouts, and error recovery.

**Metaflow cards** provide human-reviewable QA gates with visualization.

Every artifact—research, draft, lint report, published URL—is stored in the datastore and traceable.

When something breaks, you know *exactly* which step failed and why.

When something works, you can reproduce it at scale using the same architecture.

This is the difference between "we use ChatGPT sometimes" and "we have a reproducible, auditable publishing factory": the hallmark of an advanced AI marketing workspace.

Real-World Use Cases: Where Metaflow Pipelines Shine

1. Programmatic SEO at Scale

Generate 500 location-specific landing pages. Each page is a run with different `primary_keyword` and `location` parameters. Metaflow handles parallel execution, retries, and logging. You review QA cards for a sample, then approve the batch deployment. This is the power of [AI workflows for marketing](https://metaflow.life/ai-workflows-for-growth-marketing) in practice.

2. Content Refresh Pipelines

Identify underperforming posts via Google Search Console. Feed URLs into a system that:

Scrapes current articles

Analyzes SERP changes since the post was published

Generates updated sections via LLM, informed by the new SERP trends

Re-lints for optimization

Publishes the update with a changelog
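One way to produce that changelog is to diff the published text against the refreshed text with Python's `difflib`, then store the result as an artifact. The sketch below uses made-up example text, and `section_changelog` is a hypothetical helper. The previous run keeps the old version, so rolling back means republishing that run's artifact.

```python
import difflib
from typing import List


def section_changelog(old: str, new: str, label: str = "body") -> List[str]:
    """Unified diff of the published text vs. the refreshed text."""
    return list(difflib.unified_diff(
        old.splitlines(), new.splitlines(),
        fromfile=f"{label}@published", tofile=f"{label}@refresh", lineterm="",
    ))


old = "Pricing starts at $10.\nContact sales for details."
new = "Pricing starts at $12.\nContact sales for details."

# Inside the refresh flow: self.changelog = section_changelog(old_text, new_text)
print("\n".join(section_changelog(old, new)))
```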

Every refresh is versioned. You can roll back if rankings drop.

3. Multi-Channel Publishing

One draft, multiple outputs. A single orchestration:

Drafts long-form blog post

Generates LinkedIn summary

Creates Twitter thread

Produces email newsletter version

Publishes all four through the relevant platform APIs, with tracking links

Each output is its own `@step`, and the branches run in parallel. Metaflow orchestrates the fan-out and the fan-in (a join step that collects every branch's result).

4. Compliance and Legal Review

For regulated industries (finance, healthcare), add a manual review gate before anything is published:
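Below is a sketch of the gate as a check at the top of the `publish` step. The approval store (a JSON file mapping article slugs to decisions) and the function names are hypothetical. Approvals are keyed by article rather than by run id because `resume` starts a new run with a new id. A decision recorded against the article still applies after that.

```python
import json
from pathlib import Path
from typing import Any, Dict


def load_approvals(path: Path) -> Dict[str, Any]:
    """Hypothetical store: JSON mapping article slugs to reviewer decisions."""
    return json.loads(path.read_text()) if path.exists() else {}


def require_approval(article_slug: str, approvals: Dict[str, Any]) -> Dict[str, Any]:
    """Raise unless a reviewer explicitly approved this article."""
    decision = approvals.get(article_slug)
    if not decision or decision.get("status") != "approved":
        raise PermissionError(f"No approval on record for '{article_slug}'; not publishing.")
    return decision


# First lines of the publish step (approvals.json is a placeholder path):
#   self.approval = require_approval(self.cms_payload["slug"],
#                                    load_approvals(Path("approvals.json")))
```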

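The publish step fails unless a reviewer has signed off, so the run stops before anything ships. Legal reviews the draft in a Metaflow card and records their decision (for example, through a webhook that writes to an approval store). Rather than pausing mid-run, you then continue with `python your_flow.py resume`, which reuses the completed steps and re-runs publishing. On Argo Workflows, an event trigger can start a separate publish flow instead. No unapproved draft reaches production.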

Integrating Metaflow with Your Existing SEO Stack

Metaflow isn't a replacement for your best SEO automation tools—it's the orchestration layer that ties them together through API integration.

Keyword Research

Use Ahrefs or SEMrush APIs in the `fetch_research` stage. Metaflow's `@retry` re-runs the step after transient failures; pacing requests to stay under rate limits is up to your code.
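Metaflow's `@retry` re-runs a whole step after it fails; it does not pace individual requests. Below is a sketch of in-step exponential backoff with jitter. It assumes your API wrapper raises the hypothetical `RateLimited` exception on an HTTP 429 response.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimited(Exception):
    """Hypothetical: raise this from your API wrapper on an HTTP 429 response."""


def with_backoff(call: Callable[[], T], attempts: int = 5, base_delay: float = 1.0,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Call `call`, backing off exponentially (with jitter) while it is rate limited."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts - 1):
        try:
            return call()
        except RateLimited:
            # Wait base_delay * 1, 2, 4, ... plus up to base_delay of random jitter.
            sleep(base_delay * 2 ** attempt + random.uniform(0, base_delay))
    # Final attempt: any error propagates, failing the step so @retry can take over.
    return call()
```

The `sleep` argument is injectable so the helper can be tested without real waiting.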

Content Generation

Call OpenAI, Anthropic, or Cohere in the `draft_content` stage. Store the model name, temperature, and token counts as artifacts (for example, `self.model` and `self.temperature`) so every run records them.

SEO Validation

Integrate tools like Surfer, Clearscope, or custom NLP models in the `lint_seo` stage. Metaflow stores validation reports in the datastore.

Publishing

Deploy to WordPress, Webflow, Contentful, or static site generators in the `publish` stage. Store the published URL as an artifact, and Metaflow keeps a record of what shipped where in its datastore (which can live in AWS S3 or another cloud object store).

Analytics

Log to Google Analytics, Mixpanel, or your data warehouse in the `measure` stage. Metaflow's `current.run_id` gives every event a stable key for attribution tracking.

The beauty: when you swap Ahrefs for SEMrush, you change one function. The architecture stays the same. Your institutional knowledge (how you publish, not what you use) is preserved. This is a key advantage of using a robust AI workflow builder.

Getting Started: Your First Metaflow SEO Pipeline

Ready to build? Here's the minimal viable system using Python:

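Run it by saving the flow to a file (for example, `seo_flow.py`) and calling `python seo_flow.py run`. Then open a step's QA card with `python seo_flow.py card view <step_name>`.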

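You'll see logs, stored artifacts, and a Metaflow card with your score. From here, replace placeholders with real API calls. Add parameters for tone and model configuration. Read CMS credentials from environment variables or a secrets manager instead, because Parameter values are stored with each run. Add `@retry` and `@timeout` to steps that call external APIs, and `@resources` to steps that need more CPU or memory. Add more QA gates.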

Within a week, you'll have a production-grade automation system that your entire team can run without touching code. For teams that want a lower-code starting point, a no-code AI agent builder is also worth considering.

The Metaflow Advantage: Why This Beats Notebooks and Scripts

You could build this with Jupyter notebooks and cron jobs for scheduling. But you'd lose:

Versioning: Metaflow stores every artifact from every run in a datastore. Notebooks overwrite.

Reproducibility: Metaflow snapshots your code and parameters on every run, and can pin dependencies with `@pypi` or `@conda`. Notebooks assume "it works on my machine."

Observability: Metaflow cards provide rich dashboards for monitoring. Notebooks print to `stdout`.

Collaboration: Metaflow flows are plain Python files you can review and version in Git. Notebooks are opaque blobs.

Resilience: Metaflow retries failed steps and can `resume` a failed run from the step that broke. Notebooks crash and ghost you.

For one-off experiments, notebooks are fine. For AI SEO agents that run weekly, monthly, or on demand for years (locally, or on Kubernetes or AWS Batch), Metaflow is the saner choice.

Conclusion: The Infrastructure Layer for AI-Powered Growth

The future of optimization isn't "humans vs. AI." It's *humans with AI infrastructure*. The teams that win won't be the ones with the best prompts; prompts are commoditized. They'll be the ones with the best *publishing systems*: reproducible, auditable, scalable architectures that turn articles into a competitive moat.

Metaflow is that framework. It's the orchestration layer that makes AI marketing agents production-ready. It's how you go from "we drafted a blog post with ChatGPT" to "we shipped 50 posts this quarter, tracked their performance, identified winners, and scaled them, all without hiring a dev team."

Every stage is versioned. Every artifact is traceable in the datastore. Every decision point has a QA gate. And when something works, you can reproduce it forever using the same Python code and configuration.

This is the infrastructure for every AI workflow described across this knowledge base. Whether you're building programmatic landing pages, automating refreshes, or orchestrating multi-channel campaigns, Metaflow is the foundation for AI-powered marketing automation.

Start with one post. Turn it into a DAG. Add parameters for experiments. Add QA gates. Add Metaflow cards for visualization. Then run it again. And again. And again.

Welcome to the publishing factory powered by machine learning. It's reproducible. It's auditable. And it's yours.

FAQs

What is a Metaflow pipeline?

A Metaflow pipeline is a Python-defined workflow (a DAG of steps) for running data/ML tasks in a reproducible, observable way. Each step produces artifacts that can be versioned and inspected later, which makes debugging and collaboration much easier than ad-hoc scripts.

What problem does Metaflow solve for AI content and SEO workflows?

It turns fragile, manual “prompt + copy/paste” processes into a production-grade automation pipeline with version control, retries, timeouts, and a clear audit trail. That means you can reproduce the exact research inputs, prompts, drafts, validation reports, and publishing outputs for any run.

What is FlowSpec in Metaflow?

FlowSpec is the base class you inherit from to define your workflow as a set of @step methods connected into a DAG. It acts as the blueprint for execution order, data passing between steps, and storing artifacts from each run.

How do Metaflow Parameters make a publishing system reusable?

Metaflow Parameter objects let you change runtime inputs (like primary keyword, tone, publish date, or brief URL) without changing code. This enables repeatable campaigns, A/B tests (e.g., model temperature or prompts), and batch runs for programmatic SEO while keeping the workflow logic identical.

What do Metaflow step decorators like @retry and @timeout do?

They add reliability controls to individual steps so flaky API calls and long-running generations don’t derail the whole workflow. @retry automatically re-attempts failed steps, while @timeout stops runaway executions to control costs and prevent stuck runs.

What is a good end-to-end content automation pipeline for SEO?

A typical automation pipeline is research → draft → SEO lint/validation → build (Markdown→HTML, metadata) → publish (CMS) → measure (analytics + rank tracking). The key is treating each stage as a testable unit with stored artifacts and explicit QA gates before anything goes live.

What are Metaflow cards and how do they help non-technical reviews?

Metaflow cards are generated, human-readable reports attached to a step that can display tables, charts, images, and Markdown summaries. They’re useful for QA gates like keyword density, readability, word count, and metadata checks so stakeholders can approve or reject outputs without reading logs.

How is Metaflow different from notebooks or simple Python scripts for content automation?

Notebooks and scripts are easy to start but hard to reproduce: they often overwrite outputs, hide parameter choices, and lack consistent observability. Metaflow adds structured execution, artifact versioning, and an auditable history of what changed (inputs, code, parameters, outputs) across runs.

Can Metaflow integrate with Ahrefs, SEMrush, WordPress, or analytics tools?

Yes—most SEO and publishing tools expose APIs, and a workflow can call them during research, validation, publishing, and measurement steps. Metaflow fits as an orchestration layer that coordinates those API calls, logs results, and retries safely when rate limits or transient failures occur.

How do you add compliance or human approval gates to an AI publishing workflow?

Add an explicit review step that blocks publishing until a manual approval signal is received (e.g., from a webhook or internal process). This pattern is common in regulated industries because it creates a documented, auditable checkpoint between generation and deployment—useful whether you implement it with Metaflow triggers or an external approval system.